At the start of 2014, attackers’ favorite distributed denial of service attack strategy was to send messages to misconfigured servers with a spoofed return address – the servers would keep trying to reply to those messages, allowing the attackers to magnify the impact of their traffic. As those servers got patched, this strategy became less and less effective. But now it’s back, according to a new report from Akamai. Except this time, instead of hitting data center servers or DNS servers, the attackers are going after personal computers on misconfigured home networks.

According to Eric Kobrin, Akamai’s director of information security responsible for adversarial resilience, the attackers are taking advantage of plug-and-play protocols, commonly used by printers and other peripheral devices. These attacks, known as Simple Service Discovery Protocol (SSDP) attacks, are now the single largest attack vector for DDoS attacks, accounting for 21 percent of all attacks, up from 15 percent last quarter, and less than 1 percent at this time last year. “There are infectable SSDP services all over the Internet,” he said. “As they are discovered, we help work with people to shut them down.”

Although each particular device has just a fraction of the bandwidth available to data center-based servers, there are more of them. “There’s a fertile ground of home systems,” he said. “A properly configured home firewall can block this, but there are many improperly configured home systems connected to the Internet – and there are also industrial systems that can be used to reflect attacks as well.” This attack source is also harder to shut down, he said. “It’s easier to go into the data center and have the service providers do the clean-up,” he said.

Last quarter, SYN flood attacks – where “synchronize” messages are sent to servers – were the leading attack vector, accounting for 17 percent of all attacks, down slightly from 18 percent of all attacks at the start of 2014.
There has also been a change in the size of the median attack, and the typical size range of attacks, Kobrin said, as defensive measures have improved. “The smallest effective attack size has increased, year over year,” he said. “It’s because the smallest attacks are no longer effective.”

Another type of DoS attack has gained a foothold for the first time this year. SQL injections, normally used to gain access to systems for the purpose of stealing data, are now being used to shut down Web sites as well. Akamai saw more than 52 million SQL injection attacks during the first quarter of 2015, which accounted for 29 percent of all Web application attacks. The most common targets for SQL injection attacks were retail, travel and media websites.

Finally, another attack vector that’s just now starting to make an impact is domain hijacking. “People are actually attacking the registries and getting their own information put in, so the big sites are losing control of their DNS infrastructure,” Kobrin said. There have been a few high-profile cases so far, he said, mostly politically motivated, but not yet enough data to measure a trend. “We didn’t see it much in 2012, started seeing a little bit of it in 2013 and 2014, and seeing more of it now,” he said.

He recommended that companies switch on two-factor authentication for their email systems when available, ensure that employees don’t reuse credentials, ask their domain registrars to put a lock on their domains, and, finally, keep a close eye on traffic numbers to spot a drop-off as soon as it happens. With these domain redirects, the attackers are not only able to shut down the legitimate website, but also put up their own content under that website’s brand.

Source: http://www.csoonline.com/article/2923832/business-continuity/ddos-reflection-attacks-are-back-and-this-time-its-personal.html


How organisations can eliminate the DDoS attack ‘blind spot’

Most DDoS defence solutions are missing critical parts of the threat landscape thanks to a lack of proper visibility.

Online organisations need to take a closer look at the problem of business disruption resulting from the external DDoS attacks that every organisation is unavoidably exposed to when they connect to an unsecured or ‘raw’ Internet feed. Key components of any realistic DDoS defense strategy are proper visualisation and analytics into these security events. DDoS event data allows security teams to see all threat vectors associated with an attack – even complex hybrid attacks that are well disguised in order to achieve the goal of data exfiltration.

Unfortunately, many legacy DDoS defense solutions are not focused on providing visibility into all layers of an attack and are strictly tasked with looking for flow peaks on the network. If all you are looking for is anomalous bandwidth spikes, you may be missing critical attack vectors that are seriously compromising your business. In the face of this new cyber-risk, traditional approaches to network security are proving ineffective. The increase in available Internet bandwidth, widespread access to cyber-attack software tools and ‘dark web’ services for hire, has led to a rapid evolution of increasingly sophisticated DDoS techniques used by cyber criminals to disrupt and exploit businesses around the world.

DDoS as a diversionary tactic

Today, DDoS attack techniques are more commonly employed by attackers to do far more than deny service. Attack attempts experienced by Corero’s protected customers in Q4 2014 indicate that short bursts of sub-saturating DDoS attacks are becoming more of the norm. The recent DDoS Trends and Analysis report indicates that 66% of attack attempts targeting Corero customers were less than 1Gbps in peak bandwidth utilisation, and were under five minutes in duration. Clearly this level of attack is not a threat to disrupt service for the majority of online entities.
And yet the majority of attacks utilising well known DDoS attack vectors fit this profile. So why would a DDoS attack be designed to maintain service availability if ‘Denial of Service’ is the true intent? What’s the point if you aren’t aiming to take an entire IT infrastructure down, or wipe out hosted customers with bogus traffic, or flood service provider environments with massive amounts of malicious traffic? Unfortunately, the answer is quite alarming.

For organisations that don’t take advantage of in-line DDoS protection positioned at the network edge, these partial link saturation attacks, which occur in bursts of short duration, enter the network unimpeded and begin overwhelming traditional security infrastructure. In turn, this activity stimulates unnecessary logging of DDoS event data, which may prevent the logging of more important security events and sends the layers of the security infrastructure into a reboot or fallback mode. These attacks are sophisticated enough to leave just enough bandwidth available for other multi-vector attacks to make their way into the network and past weakened network security layers undetected. There would be little to no trace of these additional attack vectors infiltrating the compromised network, as the initial DDoS had done its job: distract all security resources from performing their intended functions.

Multi-vector and adaptive DDoS attack techniques are becoming more common

Many equate DDoS with one type of attack vector – volumetric. It is not surprising, as these high bandwidth-consuming attacks are easier to identify, and defend against with on-premises or cloud based anti-DDoS solutions, or a combination of both.
The attack attempts against Corero’s customers in Q4 2014 not only employed brute force multi-vector DDoS attacks, but there was an emerging trend where attackers have implemented more adaptive multi-vector methods to profile the nature of the target network’s security defenses, and subsequently selected a second or third attack designed to circumvent an organisation’s layered protection strategy. While volumetric attacks remain the most common DDoS attack type targeting Corero customers, combination or adaptive attacks are emerging as a new threat vector.

Empowering security teams with DDoS visibility

As the DDoS threat landscape evolves, so does the role of the security team tasked with protecting against these sophisticated and adaptive attacks. Obtaining clear visibility into the attacks lurking on the network is rapidly becoming a priority for network security professionals. The Internet connected business is now realising the importance of security tools that offer comprehensive visibility from a single analysis console or ‘single pane of glass’ to gain a complete understanding of the DDoS attacks and cyber threats targeting their Internet-facing services.

Dashboards of actionable security intelligence can expose volumetric DDoS attack activity, such as reflection, amplification, and flooding attacks. Additionally, insight into targeted resource exhaustion attacks, low and slow attacks, victim servers, ports, and services as well as malicious IP addresses and botnets is mandatory. Unfortunately, most attacks of these types typically slide under the radar in DDoS scrubbing lane solutions, or go completely undetected by cloud based DDoS protection services, which rely on coarse sampling of the network perimeter. Extracting meaningful information from volumes of raw security events has been a virtual impossibility for all but the largest enterprises with dedicated security analysts.
Next generation DDoS defense solutions can provide this capability in a turn-key fashion to organisations of all sizes. By combining high-performance in-line DDoS event detection and mitigation capabilities with sophisticated event data analysis in a state-of-the-art big data platform, these solutions can quickly find the needles in the haystack of security events. With the ability to uncover hidden patterns of data, identify emerging vulnerabilities within the massive streams of DDoS attack and security event data, and respond decisively with countermeasures, next-generation DDoS first line of defense solutions provide security teams with the tools required to better protect their organization against the dynamic DDoS threat landscape. Source: http://www.information-age.com/technology/security/123459482/how-organisations-can-eliminate-ddos-attack-blind-spot


TRD Admin On The Ransom DDoS That Is Hitting The Dark Net Markets

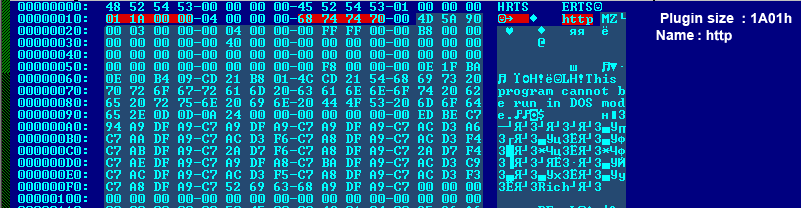

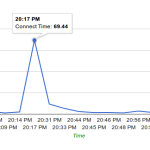

The admin of Therealdeal market ( http://trdealmgn4uvm42g.onion/ ) provided us with some insights about the recent DDoS attacks that have been hitting all the major DNMs in the past week:

In the past few days, it seems like almost every DN market is being hit by DDoS attacks. Our logs show huge amounts of basic HTTP requests aiming for dynamic pages, probably in an attempt to (ab)use as many resources as possible on the server side, for example by requesting pages that execute many SQL queries or generate captcha codes. As we are security oriented, we managed to halt the attack on our servers the moment it showed up in the logs, although this required fast thinking, because dealing with this kind of attack over Tor is not the same as dealing with such an attack over clearnet. New addresses? Shifting pages? Waiting? All these did not work for other markets…

Here you can see the beginning and failure, as caught by Dnstats: As you can see, our market’s response time spiked to almost 70 seconds, while our market’s usual response time is insanely fast, almost like most clearnet sites. But you can also see that the response time was back to 2-3 seconds a little after. Here is an example of a darknet market that didn’t know how to combat this problem: the flat line at 0 seconds means there was no response from the server.

The Problem

As opposed to clearnet attacks, where mitigation steps could be taken by simply blocking the offending IP addresses, when it comes to Tor, the requests are coming from the localhost (127.0.0.1) IP address, as everything is tunneled through Tor. Another problem is the fact that the attackers are using the same user-agent as Tor Browser – hence we cannot drop packets based on UA strings. The attackers are also aiming for critical pages of our site – for example the captcha generation page. Removing this page will not allow our users to log in, or will open the site to bruteforce attempts. Renaming this page just made them aim for the new URL (almost instantly, seems very much automated). One of the temporary solutions was to run a script that constantly renamed and re-wrote the login page after 1 successful request for a captcha… Attacks then turned into POST requests aiming for the login page.

Solutions

If you are a DNM owner or just the security admin, check your webserver logs. There is something unique in the HTTP requests, maybe a string asking you to pay to a specific address (assuming these are the same offenders). Otherwise there might be something else… Hint: you might need to load tcpdump during an attack.

Hopefully, you are not using some kind of VPS and have your own dedicated servers and proxy servers. Or if you are using some shit VPS, then hopefully you are using KVM or XEN (the first reason being that otherwise the memory is leakable and accessible by any other user of the same service). The other reason is control on the kernel level. You can drop packets containing specific strings by using iptables, or use regex too. This is one example of a command that we executed (amongst others) to get rid of the offenders; we cannot specify all of them, so be creative!

iptables -A INPUT -p tcp --dport 80 -m string --algo bm --string "(RANSOM_BITCOIN_ADDRESS)" -j DROP

Where (RANSOM_BITCOIN_ADDRESS) is the unique part of the request…

To Other Market Admins:

There are additional things to be done, but if we expose them, this will only start a cat and mouse game with these attackers. If you are a DNM admin, feel free to sign up as a buyer at TheRealDeal Market and send us a message (including your commonly used PGP), since at the end of the day, even though you might see us as a competitor in a way, there are some things (like people stuck without their pain medication from Mexico) that are priceless…

Source: http://www.deepdotweb.com/2015/05/11/this-is-the-ransom-ddos-that-is-hitting-the-dark-net-markets/
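The advice above to look for "something unique in the HTTP requests" can be sketched as a small log scan. This is only an illustration under stated assumptions: the access log is assumed to be in the common/combined format, the function names `token_counts` and `suspicious_tokens` and the thresholds are hypothetical, and the sample log lines are invented placeholders rather than real attack data.

```python
import re
from collections import Counter

# Matches the quoted request line of a common/combined-format access log entry,
# e.g. "GET /captcha.php?x=1 HTTP/1.1". The log format is an assumption.
REQUEST_RE = re.compile(r'"(?:GET|POST|HEAD) (\S+) HTTP/[\d.]+"')


def token_counts(log_lines):
    """Count in how many requests each path or query-string token appears."""
    counts = Counter()
    for line in log_lines:
        match = REQUEST_RE.search(line)
        if not match:
            continue
        # Split the request target on URL delimiters; count each token once per request.
        tokens = {t for t in re.split(r"[/?&=]", match.group(1)) if t}
        counts.update(tokens)
    return counts


def suspicious_tokens(log_lines, min_share=0.5, min_length=20):
    """Return long tokens present in at least min_share of the parsed requests."""
    lines = list(log_lines)
    total = sum(1 for line in lines if REQUEST_RE.search(line))
    if total == 0:
        return []
    counts = token_counts(lines)
    return sorted(
        token for token, n in counts.items()
        if len(token) >= min_length and n / total >= min_share
    )


# Invented sample lines; the long token is a placeholder, not a real address.
SAMPLE_LOG = [
    '127.0.0.1 - - [11/May/2015:10:00:00 +0000] "GET /captcha.php?pay=EXAMPLE_RANSOM_ADDRESS_PLACEHOLDER HTTP/1.1" 200 512',
    '127.0.0.1 - - [11/May/2015:10:00:01 +0000] "POST /login.php?pay=EXAMPLE_RANSOM_ADDRESS_PLACEHOLDER HTTP/1.1" 200 128',
    '127.0.0.1 - - [11/May/2015:10:00:02 +0000] "GET /index.php HTTP/1.1" 200 2048',
]

if __name__ == "__main__":
    print(suspicious_tokens(SAMPLE_LOG))
```

A token that shows up in most requests and is unusually long is a candidate for the kind of string filter described above. If the unique marker sits in a header or the request body rather than the URL, the log will not show it, which is presumably why the admin suggests tcpdump.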



Hacker Group DD4BC New DDos Attacks

DD4BC Launches New Wave Of DDoS Attacks The extortionist group DD4BC is believed to be connected to a new wave of distributed denial of service (DDoS) attacks against organizations based in Australia, New Zealand, and Switzerland. The group is asking for 25 BTC from those affected in exchange for giving up the flood of inbound data that has resulted in the recipient sites becoming inaccessible. Recently, DD4BC was mentioned in a warning published by the Swiss Governmental Computer Emergency Response Team (GovCERT). GovCERT is a branch of MELANI, a national agency that deals with cyber security issues. The warning read: “In the past days MELANI / GovCERT.ch has received several requests regarding a distributed denial of service (DDoS) extortion campaign related to ‘DD4BC’.” As per the New Zealand government, the extortion attempts seemingly begin with a short DDoS attack that is meant to reflect the possible impact after the ransom demand has been made. DD4BC has been linked to previous attacks on digital currency websites and businesses. The attacks include extortion attempts made against various well-known mining pool operators. GovCERT confirmed that it had so far received reports from several high profile targets, stating that some of the organizations were the victims of a wave of DDoS attacks. DD4BC’s activity has been on the rise recently, with the new wave of attacks beginning at the start of March. “ While these attacks have targeted foreign organizations in the past months, we have seen an increase of activity of DD4BC in Europe recently. Since earlier this week, the DD4BC Team expanded their operation to Switzerland, ” stated GovCERT. GovCERT also asked those affected by the attacks to not pay the ransom. Rather the agency has advised victims to file a police report and seek additional mitigation support from their Internet service provider. 
The news of the New Zealand attacks became public at the start of May after the New Zealand National Cyber Security Centre (NCSC) issued a warning regarding DDoS attacks on local organizations. While the agency did not specify who the perpetrator behind the attacks was, it did confirm that an investigation into the attacks was ongoing. Barry Brailey, chairman of the cybersecurity nonprofit New Zealand Internet Task Force, confirmed the link between DD4BC and the recent DDoS attacks in New Zealand. “Yes, [the series of attacks] appears to be linked to the group/moniker ‘DD4BC’,” he said. Other companies that have fallen victim to the group include BitBay, BitQuick, Coin Telegraph, Expresscoin, and Bitalo, which created a 100 BTC bounty after it was attacked. Source: http://bitcoinvox.com/article/1674/hacker-group-dd4bc-new-ddos-attacks


DDoS attacks threaten New Zealand organisations

The New Zealand Internet Task Force (NZITF) advises that an unknown international group has this week begun threatening New Zealand organisations with Distributed Denial of Service (DDoS) attacks. DDoS attacks are attempts to make an organisation’s Internet links or network unavailable to its users for an extended length of time. This latest DDoS threat appears as an email threatening to take down an organisation’s Internet links unless substantial payments in the digital currency Bitcoin are made. New Zealand Internet Task Force (NZITF) Chair Barry Brailey warns the threat is not an idle one and should be taken extremely seriously as the networks of some New Zealand organisations have already been targetted. “The networks of at least four New Zealand organisations that NZITF knows of have been affected, so far. A number of Australian organisations have also been affected,” he says. “This unknown group of criminals have been sending emails to a number of addresses within an organisation. Sometimes these are support or helpdesk addresses, other times they are directed at individuals. The emails contain statements threatening DDoS, such as: “Your site is going under attack unless you pay 25 Bitcoin.”, “We are aware that you probably don’t have 25 BTC at the moment, so we are giving you 24 hours.” or “IMPORTANT: You don’t even have to reply. Just pay 25 BTC to [bitcoin address] – we will know it’s you and you will never hear from us again.” The emails may also provide links to news articles about other attacks the group has conducted. NZITF urges New Zealand firms and organisations to be on the alert. They also suggest that targeted entities don’t pay as even if this stops a current attack, it makes your organisation a likely target for future exploitation as you have a history of making payments. It is also advisable staff be educated and be on the lookout for any emails matching the descriptions above. 
Have them alert appropriate security personnel within the organisation as soon as possible. Source: http://www.geekzone.co.nz/content.asp?contentid=18336


Community college targeted ongoing DDoS attack

Walla Walla Community College is under cyberattack this week by what are believed to be foreign computers that have jammed the college’s Internet systems. Bill Storms, technology director, described it as akin to having too many cars on a freeway, causing delays and disruption to those wanting to connect to the college’s website. The type of attack is a distributed denial of service, or DDoS. Such attacks are often the result of hundreds or even thousands of computers outside the U.S. that are programmed with viruses that continually connect to and overload targeted servers.

Storms said bandwidth monitors noticed the first spike of attacks on Sunday. To stop the attacks, college officials have had to periodically shut down the Web connection while providing alternative working Internet links to students and staff. The fix, so far, has only been temporary, as the problem often returns the next day. “We think we have it under control in the afternoon. And we have a quiet period,” Storms said. “And then around 9 a.m. it all comes in again.”

Walla Walla Community College may not be the only victim of the DDoS attack. Storms said he was informed that as many as 39 other state agencies have been the target of similar DDoS attacks. As for the reason for the attack, none was given to college officials. Storms noted campus operators did receive a number of unusual phone calls where the callers said that they were in control of the Internet. But no demands were made. “Some bizarre phone calls came in, and I don’t know whether to take them serious or not,” Storms said.

State officials have been contacted and are aiding the college with the problem. Storms said they have no idea how long the DDoS attack will last.

Source: http://union-bulletin.com/news/2015/apr/30/community-college-targeted-ongoing-cyberattack/


FBI investigating Rutgers University in DDoS attack

The FBI is working with Rutgers University to identify the source of a series of distributed denial-of-service (DDoS) attacks that have plagued the school this week. The assault began Monday morning and took down internet service across the campus, according to NJ.com. Some professors had to cancel classes, and students were unable to enroll, submit assignments or take finals, since Wi-Fi service and email have been affected, as has an online resource called Sakai. This is the second DDoS attack on the university this month and the third since November. Authorities and the Rutgers Office of Information and Technology (OIT) haven’t released any details thus far about the possible source of the attacks. Currently, only certain parts of the university have internet service. The school will post frequent updates to the Rutgers website about its progress in restoring service. Source: http://www.scmagazine.com/the-fbi-is-helpign-rutger-inveigate-a-series-of-ddos-attack/article/412149/


One fifth of DDoS attacks last over a day

Some 20 per cent of DDoS attacks have lasting damage that can see them taking a site down for 24 hours or more, according to research by Kaspersky. In fact, almost a tenth of the companies surveyed said their systems were down for several weeks or longer, while less than a third said they had disruption lasting less than an hour. The investigation revealed that the majority of attacks (65 per cent) caused severe delays or complete disruption, while only a third caused no disruption at all. Evgeny Vigovsky, head of Kaspersky DDoS Protection, said: “For companies, losing a service completely for a short time, or suffering constant delays in accessing it over several days, can be equally serious problems. “Both situations can impact customer satisfaction and their willingness to use the same service in the future. Using reliable security solutions to protect against DDoS attacks enables companies to give their customers uninterrupted access to online services, regardless of whether they are facing a powerful short-term assault or a weaker but persistent long-running campaign.” The company highlighted an attack on Github at the end of March when Chinese hackers brought the site down. That attack lasted 118 hours and demonstrated that even large communities are at risk. Last month, another study by Kaspersky revealed that only 37 per cent of companies were prepared for a DDoS attack, despite 26 per cent of them being concerned the problems caused by such attacks were long-term, meaning they could lose current or prospective clients as a result. Source: http://www.itpro.co.uk/security/24514/one-fifth-of-ddos-attacks-last-over-a-day

Featured article: How to use a CDN properly and make your website faster

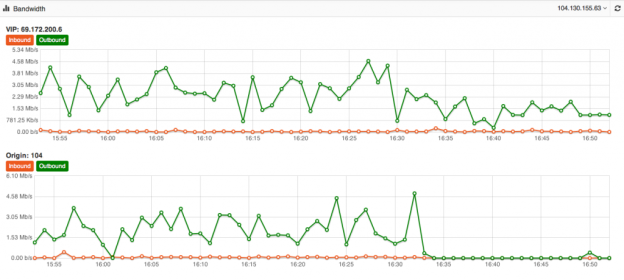

It’s one of the biggest mysteries I have seen in my 15+ years of Internet hosting and cloud based services. The mystery is, why do people use a Content Delivery Network for their website yet never fully optimize their site to take advantage of the speed and volume capabilities of the CDN? Just because you use a CDN doesn’t mean your site is automatically faster or even able to take advantage of its ability to dish out mass amounts of content in the blink of an eye. At DOSarrest I have seen the same mystery continue, which is why I have put together this piece on using a CDN, to hopefully help those who wish to take full advantage of one. Most of this information is general and can be applied to using any CDN, but I’ll also throw in some specifics that relate to DOSarrest.

Some common misconceptions about using a CDN

1. As soon as I’m configured to use a CDN my site will be faster and be able to handle a large amount of web visitors on demand.
2. Website developers create websites that are already optimized and a CDN won’t really change much.
3. There’s really nothing I can do to make my website run faster once it’s on a CDN.
4. All CDNs are pretty much the same.

Here’s what I have to say about the misconceptions noted above

1. In most cases the answer to this is…. NO !! If the CDN is not caching your content your site won’t be faster; in fact it will probably be a little slower, as every request will have to go from the visitor to the CDN, which will in turn go and fetch it from your server, then turn around and send the response back to the visitor.
2. In my opinion and experience, website developers in general do not optimize websites to use a CDN. In fact most websites don’t even take full advantage of a browser’s caching capability. As the Internet has become ubiquitously faster, this fine art has been left by the wayside in most cases. Another reason I think this has happened is that websites are huge and complex, and a lot of content is dynamically generated, coupled with very fast servers with large amounts of memory. Why spend time on optimizing caching when a fast server will overcome this overhead?
3. Oh yes you can, and that’s why I have written this piece…see below.
4. No they aren’t. Many CDNs don’t want you to know how things are really working from every node that they are broadcasting your content from. You have to go out and subscribe to a third party service. If you have to get a third party service, do it; it can be fairly expensive but well worth it. How else will you know how your site is performing from other geographic regions?

A good CDN should let you know the following in real-time, but many don’t.

a. Number of connections/requests between the CDN and visitors.
b. Number of connections/requests between the CDN and your server (origin). You want to try and have the number of requests to your server be less than the number of requests from the CDN to your visitors.
*Tip – Use HTTP 1.1 on both “a” & “b” above and try to extend the keep-alive time on the origin to CDN side.
c. Bandwidth between the CDN and Internet visitors.
d. Bandwidth between the CDN and your server (origin).
*Tip – If bandwidth of “c” and “d” are about the same, news flash…You can make things better.
e. Cache status of your content (how many requests are being served by the CDN).
*Tip – This is the best metric to really know if you are using your CDN properly.
f. Performance metrics from outside of the CDN but in the same geographic region.
*Tip – Once you have the performance metrics from several different geographic regions you can compare the differences once you are on a CDN. Your site should load faster the further away the region is located from your origin server, if you’re caching properly.
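To make the comparison of “a” against “b” and “c” against “d” concrete, here is a minimal sketch of how those counters could be turned into offload ratios. The `CdnCounters` type, the function names, and the sample numbers are all hypothetical; real CDNs expose these metrics in their own formats.

```python
from dataclasses import dataclass


@dataclass
class CdnCounters:
    """Hypothetical counters for one reporting interval."""
    visitor_requests: int  # (a) requests between the CDN and visitors
    origin_requests: int   # (b) requests between the CDN and the origin
    visitor_bytes: int     # (c) bytes sent from the CDN to visitors
    origin_bytes: int      # (d) bytes fetched by the CDN from the origin


def request_offload(c: CdnCounters) -> float:
    """Share of visitor requests that never reached the origin (0.0 to 1.0)."""
    if c.visitor_requests == 0:
        return 0.0
    return 1.0 - c.origin_requests / c.visitor_requests


def bandwidth_offload(c: CdnCounters) -> float:
    """Share of delivered bytes that the origin did not have to send."""
    if c.visitor_bytes == 0:
        return 0.0
    return 1.0 - c.origin_bytes / c.visitor_bytes


if __name__ == "__main__":
    # Invented numbers for illustration only.
    sample = CdnCounters(
        visitor_requests=10_000,
        origin_requests=1_000,
        visitor_bytes=500_000_000,
        origin_bytes=50_000_000,
    )
    print(f"request offload:   {request_offload(sample):.0%}")
    print(f"bandwidth offload: {bandwidth_offload(sample):.0%}")
```

An offload near zero corresponds to the “news flash” case in the tip above: the origin is doing almost as much work as the CDN, so little is being cached.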
For the record, DOSarrest provides all of the above in real time, and it’s these tools I’ll use to explain how to take full advantage of any CDN. Without metrics, there’s no scientific way to know you’re on the right track to making your site super fast.

There are five main groups of cache control headers that affect how and what is cached.

Expires: When attempting to retrieve a resource, a browser will usually check whether it already has a copy available for reuse. If the Expires date has passed, the browser will download the resource again.

Cache-Control: Introduced in HTTP/1.1, this expands on the functionality offered by Expires. There are several options available for the Cache-Control header:
- Public: This resource is cacheable. In the absence of any contradicting directive this is assumed.
- Private: This resource is cacheable by the end user’s browser only. Intermediate (shared) caches must not store it.
- No-cache: A cache may store this resource but must revalidate it with the origin before every reuse.
- No-store: Do not cache, do not store the request or the response. I was never here, we never spoke. Capiche?
- Must-revalidate: Once this resource is stale, do not reuse it without revalidating with the origin.
- Proxy-revalidate: The end user may use stale copies, but intermediate caches must revalidate.
- Max-age: The length of time (in seconds) before a resource is considered stale.
A response may include any combination of these directives, for example: private, max-age=3600, must-revalidate.

X-Accel-Expires: This works much like the Expires header but is intended only for proxy services. Browsers are meant to ignore it, and a proxy should strip it from the response before passing it on.

Set-Cookie: While not explicitly a cache directive, cookies are generally designed to hold user-specific and/or session-specific information. Caching such responses would have a negative impact on the desired site functionality.

Vary: Lists the request headers that determine distinct copies of the resource.
The cache will need to keep a separate copy of this resource for each distinct set of values in the headers indicated by Vary. A Vary response of “*” indicates that each request is unique.

Given that most websites, in my opinion, are not taking full advantage of caching by a browser or by a CDN (if you’re using one), there is still a way around this without reviewing and adjusting every cache control header on your website. Any CDN worth its cost, as well as any cloud-based DDoS protection service, should be able to override most website cache-control headers.

For demonstration purposes we used our own live website, DOSarrest.com, and ran a traffic generator to stress the server a little on top of our regular visitor traffic. The demonstration shows what happens when traffic passes through a CDN: the activity between the CDN and the Internet visitor, and between the CDN and the customer’s server on the back end. At approximately 16:30 we enabled a feature of DOSarrest’s service that we call “Forced Caching”. What this does is override, in other words ignore, some of the origin server’s cache control headers.

These are the results:

Notice that bandwidth between the CDN and the origin (second graph) has fallen by over 90%. This saves resources on the origin server and makes things faster for the visitor.

This is the best graphic illustration to let you know that you’re on the right track: cache hits go way up, “not cached” goes down, and expired and misses are negligible.

The graph below shows that requests to the origin have dropped by 90%. It’s telling you the CDN is doing the heavy lifting.

Last but not least, this is the fruit of your labor as seen by 8 sensors in 4 geographic regions from our customer “DEMS” portal. The site is running 10 times faster in every location, even under load!
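To make the header rules and the idea of a forced-caching override a little more concrete, here is a minimal Python sketch of how a shared cache might decide how long to keep a response. It is an illustration only, not how DOSarrest’s “Forced Caching” or any particular CDN is implemented. The function names and the `forced_ttl` parameter are hypothetical, and the choice to keep honoring private, no-store, Set-Cookie and “Vary: *” even under the override is my own assumption. The Expires header and revalidation are left out to keep it short.

```python
from typing import Dict, Optional, Tuple


def parse_cache_control(value: str) -> Dict[str, Optional[str]]:
    """Split a Cache-Control value into {directive: argument or None}."""
    directives: Dict[str, Optional[str]] = {}
    for part in value.split(","):
        part = part.strip()
        if not part:
            continue
        name, _, arg = part.partition("=")
        directives[name.strip().lower()] = arg.strip().strip('"') or None
    return directives


def shared_cache_ttl(headers: Dict[str, str], forced_ttl: Optional[int] = None) -> int:
    """Seconds a shared cache would keep this response; 0 means do not cache.

    forced_ttl is a hypothetical override: when set, it replaces the
    origin's no-cache / max-age policy (an assumption for illustration).
    """
    h = {k.lower(): v for k, v in headers.items()}
    # User-specific responses are never shared, even with the override on.
    if "set-cookie" in h or h.get("vary", "").strip() == "*":
        return 0
    cc = parse_cache_control(h.get("cache-control", ""))
    if "no-store" in cc or "private" in cc:
        return 0
    if forced_ttl is not None:
        return forced_ttl
    # Simplification: no-cache really means "revalidate before reuse";
    # this sketch treats it as not cacheable.
    if "no-cache" in cc:
        return 0
    max_age = cc.get("max-age") or ""
    return int(max_age) if max_age.isdigit() else 0


def cache_key(url: str, request_headers: Dict[str, str], vary: str) -> Tuple:
    """Build a cache key from the URL plus the request headers named in Vary."""
    req = {k.lower(): v for k, v in request_headers.items()}
    names = sorted(n.strip().lower() for n in vary.split(",") if n.strip())
    return (url,) + tuple((n, req.get(n, "")) for n in names)
```

For example, `shared_cache_ttl({"Cache-Control": "no-cache"})` returns 0, while the same call with `forced_ttl=300` returns 300. A response marked private, or one that sets a cookie, stays uncached either way.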

Follow this link:

Featured article: How to use a CDN properly and make your website faster

How Google saw the DDoS attack against Github and GreatFire

The recent DDoS attacks aimed at GreatFire, a website that exposes China's internet censorship efforts and helps users get access to their mirror-sites, and GitHub, the world's largest code hosting se…
